Tech

Roblox to require age verification for every user’s chat access in January

Roblox, the hugely popular online platform known for user-generated games and social interaction, is imposing a sweeping new requirement: from early January 2026, all users who want to access chat features must complete an age check.

The initiative follows mounting pressure from authorities and parents: the company has faced lawsuits and regulatory scrutiny in the US and elsewhere over child-safety concerns.

From December 2025, the age-check system will go live in select markets (Australia, New Zealand and the Netherlands), and from January it will expand globally wherever chat is available.

Users will verify their age either through facial age estimation, using a selfie or video prompt, or with ID verification, processed by the vendor Persona. They will then be placed into one of six age brackets: under 9, 9-12, 13-15, 16-17, 18-20, and 21 and over.

Once users are grouped, their chat access is limited: for example, a 12-year-old may be allowed to chat only with users aged 15 or younger, while an 18-year-old might chat with users aged 16 and over, and with younger users only if they are designated as a ‘Trusted Connection’.

Roblox emphasises that the images and videos captured by the camera will be deleted after processing.

Yet the move raises privacy and implementation questions. Experts note that age-estimation technology is less reliable at the extremes of age ranges.

For broader context, Roblox has previously rolled out age-estimation and verification tools, for example earlier in 2025 for teens and Trusted Connections.

For creators, players and parents, the change signals a shift: chat features will no longer be uniformly available, and users may need to verify their age before fully engaging socially. Roblox aims to make its platform safer for younger users, and to show regulators that it is responding. But some critics argue the technology may misclassify users or create friction.

In sum, Roblox’s upcoming age-check requirement marks a milestone in how online gaming platforms attempt to safeguard minors, enforce age-appropriate interaction, and respond to legal and reputational risks. Whether the system will seamlessly balance safety, privacy and user experience remains to be seen.

Gulf News

Tech

The medieval secrets being revealed by AI

Historic messages and documents obscured by incomprehensible ciphers can be found in libraries and archives all over the world. Artificial intelligence is helping historians crack open these mysterious texts.

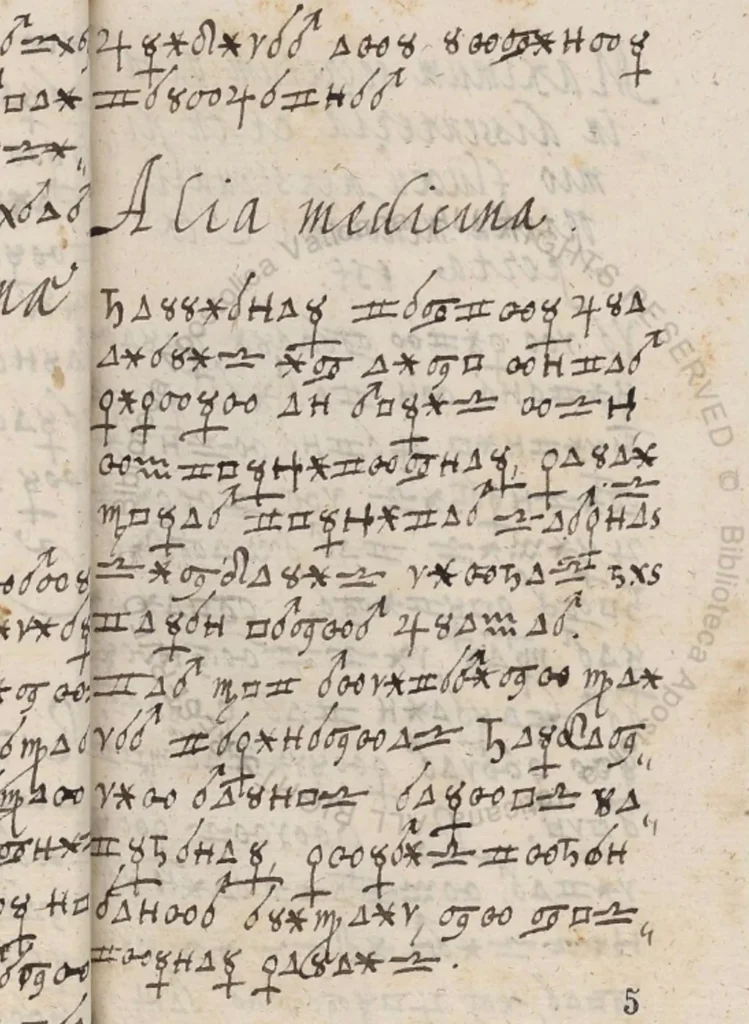

Deep in the archives of the Vatican library, a mysterious hand-written book, scrawled with strange symbols, had lain unread for more than 400 years. Its cryptic pages apparently concealed secret remedies “for affections of the human body”, according to some text scratched inside the cover. Such healing practices were kept under wraps at the time since they could attract suspicion or even accusations of witchcraft.

Known as the Borg cipher, the 408-page-long manuscript is mostly incomprehensible – coded using 34 obscure symbols with a few Roman letters and a front page written in Arabic. There was no known key to reveal what was encrypted. Some of the pages are also damaged due to their age, making the code even more challenging to read.

But with the help of machine learning – a form of artificial intelligence – researchers were able to unravel the code. They discovered the text was filled with thousands of bizarre treatments such as drinking several glasses of high-quality red wine or fermenting a nutmeg in some dough to combat dysentery.

“It is like detective work where every symbol, pattern, and partial solution may bring us closer to someone’s secrets and to a lost historical world,” says Beáta Megyesi, a professor in computational linguistics at Stockholm University in Sweden, who was part of the team that decoded the text. Even with the help of AI, the process of unlocking the cipher key was painstaking.

Now Megyesi and her colleagues are leading efforts to harness the power of AI to crack historic ciphers more efficiently, potentially unlocking a wealth of coded information from the past that has previously been uncrackable.

According to some estimates, around 1% of the material in archives and libraries around the world is fully or partially encrypted. Some of the earliest known ciphers date back to Ancient Greece and Rome.

Decoys, dead languages and bad handwriting

Together, coded historic documents conceal diplomatic intelligence, the rituals of secret societies, medical knowledge, love affairs or everyday details that people wanted to keep secret. This information is currently missing from historical narratives. In some cases, decoding these documents has the potential to rewrite what we know about a famous individual or an entire period of history. (One recent example is a collection of coded letters that were found to have been written by Mary Queen of Scots during her long imprisonment in England. They revealed her involvement in plots to regain her throne and her tense relationship with her son, James VI of Scotland and the future King James I of England.)

Historic ciphers can be relatively simple: the Borg cipher, for example, uses a simple substitution cipher, meaning that each Roman letter was replaced by a single symbol to hide what was written. Others, however, can be difficult to unravel. In some cases, nothing is known about the language of the original, unencrypted text. Extra, meaningless symbols can also be inserted as decoys to throw off anyone hoping to snoop on the text. In other cases, several signs can be used to represent the same letter.
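To make the idea of a simple substitution cipher concrete, here is a minimal Python sketch. It assumes a hypothetical key that swaps each lowercase letter for one uppercase stand-in "symbol"; real manuscripts such as the Borg cipher use invented signs, and this is not their key. Decoys and multiple symbols per letter are deliberately left out.

```python
import string

# Hypothetical key: each plaintext letter a-z gets exactly one stand-in "symbol".
# Real historic ciphers used invented signs; uppercase letters are used here only for readability.
SYMBOLS = "QWERTYUIOPASDFGHJKLZXCVBNM"
KEY = dict(zip(string.ascii_lowercase, SYMBOLS))


def encrypt(plaintext: str, key: dict[str, str]) -> str:
    """Swap every letter for its single symbol; spaces and punctuation pass through unchanged."""
    return "".join(key.get(ch, ch) for ch in plaintext.lower())


def decrypt(ciphertext: str, key: dict[str, str]) -> str:
    """Invert the one-to-one key, so each symbol maps back to exactly one letter."""
    inverse = {symbol: letter for letter, symbol in key.items()}
    return "".join(inverse.get(ch, ch) for ch in ciphertext)


secret = encrypt("red wine", KEY)
print(secret)                # KTR VOFT
print(decrypt(secret, KEY))  # red wine
```

Because the key is one-to-one, anyone holding it can reverse the text mechanically; the hard part for historians is recovering the key when it has been lost.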

These complications can mean a huge amount of work – often involving trial and error – to decode even a small amount of text. It took Cecile Pierrot, a cryptologist at the French National Institute for Computer Science Research (INRIA) in Nancy, France, and her colleagues six months to gradually unravel the key to a 500-year-old letter from Charles V, the Holy Roman Emperor and King of Spain, that had been written using 120 different cipher symbols across three pages. (The decrypted letter revealed Charles V – one of the most powerful men of his time – undone by fear of a plot to kill him. The king was terrified that an Italian mercenary warlord serving the French king, Francis I, was about to assassinate him.)

Before code-breaking can begin, researchers must first painstakingly transform a handwritten cipher into a digital document that can be fed into code-cracking software. Bad handwriting and fading of the ink can make this task even harder.

Pierrot says it typically takes her a day just to transcribe a two-page letter containing symbols that are unfamiliar to her.

How AI is helping speed-read secrets

But AI is starting to speed up the process. Michelle Waldispühl, a professor of German linguistics at the University of Oslo in Norway, and her colleagues recently used an online AI platform called Transkribus to transcribe a secret letter written in 1637 by the nobleman Sigismund Heusner von Wandersleben to the Swedish Lord High Chancellor Axel Oxenstierna, at the height of the 30 Years’ War, a religious conflict that would ultimately claim millions of lives and devastate huge swathes of Europe.

The tool has been trained on various languages, scripts and handwriting styles that cover several centuries. After the image of a document is uploaded to the system, the AI detects blocks of text and individual lines before scanning the whole text character by character to turn it into a digital form.

Although some manual corrections were needed, the tool worked quite well on Von Wandersleben’s letter as it was only partly encrypted using numbers separated by dots that were neatly written with clear spaces between them. Other parts were not coded and simply written in 17th-Century German script.

Existing AI transcription platforms often struggle when manuscripts are encrypted with unusual characters, such as invented signs, astrological symbols or numbers that are written in an odd way. But Megyesi, Waldispühl and their colleagues are developing their own AI tool to turn handwritten historical texts with obscure symbols or scripts into machine-readable documents as part of the multinational Descrypt project.

“We are developing more adaptable models trained and tested across a broad range of scripts, alphabets and symbolic repertoires,” says Megyesi.

Once a secret document has been transcribed, the detective work can begin. At the moment, cryptologists often use specially designed conventional (non-AI) software, which relies on algorithms to try to determine which cipher was used and to break the code. Simple ciphers can often be cracked by analysing how often each symbol appears and matching the symbols to letters of the alphabet that occur at the same rate in the language. In English, for example, the letter E is the most common, while Z, Q and X are among the least frequent.
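The frequency-matching step can be sketched in a few lines of Python. This is a simplified illustration, not the software cryptologists actually use: it assumes an English plaintext, uses a commonly quoted approximate ranking of English letter frequencies, and simply pairs the most frequent symbol with the most frequent letter, and so on down the list.

```python
from collections import Counter

# Approximate ranking of English letters, most to least frequent (published rankings vary slightly).
ENGLISH_ORDER = "etaoinshrdlcumwfgypbvkjxqz"


def symbol_frequencies(ciphertext: str) -> Counter:
    """Count how often each cipher symbol occurs, ignoring whitespace."""
    return Counter(ch for ch in ciphertext if not ch.isspace())


def first_guess_key(ciphertext: str, language_order: str = ENGLISH_ORDER) -> dict[str, str]:
    """Map the i-th most frequent symbol to the i-th most frequent letter of the language."""
    ranked = [symbol for symbol, _count in symbol_frequencies(ciphertext).most_common()]
    return dict(zip(ranked, language_order))


guess = first_guess_key("KTR VOFT")
print(guess["T"])  # e  (T is the only symbol that appears twice)
```

On a short text like this, only the top-ranked symbol is likely to be right and the remaining pairings are essentially arbitrary, so a first guess of this kind is only a starting point for trial and error.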

But in Von Wandersleben’s letter from the frontlines of the 30 Years’ War, for example, he used up to eight different symbols to represent the letter E. It meant trial and error, as well as Waldispühl’s knowledge of old German, was needed to gradually unpick the code.

“It was very much back and forth between the machine and the human validator,” says Waldispühl. “Maybe at some point AI can do it completely independently.”
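Why several symbols for one letter defeat simple frequency counting can be shown with a small Python sketch. The numeric code table is hypothetical (it is not Von Wandersleben’s key), the plaintext is an arbitrary German phrase, and the encipherer is assumed to rotate through E’s eight symbols in turn, whereas real writers chose among them less predictably.

```python
from collections import Counter
from itertools import cycle

# Hypothetical numeric code table: E has eight homophones, the other letters one code each.
HOMOPHONES = {
    "e": ["11", "12", "13", "14", "15", "16", "17", "18"],
    "d": ["25"],
    "n": ["24"],
    "r": ["21"],
    "s": ["22"],
    "t": ["23"],
}


def homophonic_encrypt(plaintext: str, table: dict[str, list[str]]) -> str:
    """Encode letters as dot-separated numbers, cycling through each letter's homophones."""
    rotations = {letter: cycle(codes) for letter, codes in table.items()}
    # Characters without an entry in the table (here, spaces) are dropped.
    return ".".join(next(rotations[ch]) for ch in plaintext if ch in rotations)


plain = "den ernst der rede"
cipher = homophonic_encrypt(plain, HOMOPHONES)
letter_counts = Counter(ch for ch in plain if ch.isalpha())
symbol_counts = Counter(cipher.split("."))

print(cipher)                        # 25.11.24.12.21.24.22.23.25.13.21.21.14.25.15
print(letter_counts.most_common(1))  # [('e', 5)]
print(symbol_counts.most_common(2))  # [('25', 3), ('21', 3)]
```

E is the most common letter in the plaintext, yet in the ciphertext it is split across five numbers that each appear only once, so plain frequency matching would wrongly pick 25 (D) or 21 (R) as E. Untangling this takes exactly the kind of human judgement and language knowledge Waldispühl describes.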

Hidden behind the cipher were Von Wandersleben’s warnings about the threat posed by factions among Sweden’s Protestant allies in the war. He told Oxenstierna that he had been forced to make strategic retreats from the conflict after being told about a conspiracy among his allies, including Lord Franz Heinrich of Saxony.

Reopening cold case codes

Megyesi and her team are now exploring how AI could skip the transcription stage altogether, simply by analysing photos of the pages to decipher secret messages. They recently showed how the approach could work for simple codes, where every letter is replaced by a single symbol.

They tested the system on a 105-page manuscript they had already decoded, known as the Copiale cipher, which details the rituals, rules and ideals of an 18th-Century German secret society. By training the AI on generic handwriting, followed by images of specific lines from the cipher and the corresponding, decoded German text, the system was able to accurately decipher parts of the text it hadn’t seen before.

Such a system could be especially useful when the underlying language of a cipher is unknown.

“This opens up exciting possibilities for rare and non-standard writing systems,” says Megyesi. “The ultimate goal is to combine transcription and decipherment in one single step.”

Waldispühl and her colleagues in the Descrypt project have been scouring old archives in search of cipher scripts to compile into a database. This could prove vital as a way of gathering sufficient data to train an AI capable of cracking codes. Large language models that underpin AI chatbots such as ChatGPT are trained on trillions of words from books, articles and websites. Finding equivalent amounts of data for code cracking is challenging.

Amongst the material they have collected are 400 mysterious postcards written in cipher script from the late 1800s to early 1900s. The few scraps decoded so far reveal some of these to be love letters written in German.

Megyesi’s team have used their work to create an AI chatbot-style tool that combines transcription and decryption in a single step. The chatbot combines decryption algorithms, trained on pairs of cipher characters and the text they represent, with large language models trained on historical texts from different time periods that help provide clues about a code. Image recognition algorithms, trained on annotated handwriting, are also being incorporated. The AI tool will also be able to improve itself by incorporating corrections from the experts who use it.

The idea would be that researchers, or even the public, could give the chatbot a coded, historical text and have it reveal what is written.

When the researchers tested their AI chatbot with the Borg cipher, Megyesi and her colleagues found it could decode a 500-symbol extract in a little over 29 minutes. It even provided an English translation. It also documented the process and explained why the solution was plausible, which is important for making sure that the AI is not hallucinating or inventing interpretations.

The team also recently tested the system with two other ciphers they had previously decoded which represent different time periods, languages, types of secret codes and levels of complexity. It quickly decrypted them too, showing that it is capable of tackling a range of ciphers.

“AI helps most with scale, speed, pattern discovery and integration of tasks,” says Megyesi.

Such AI tools could be key to cracking historical ciphers that have so far proved elusive. They could also help with ancient texts written in scripts that nobody can read today. The 4,000-year-old Phaistos Disc from Crete, for example, remains undeciphered, as does Linear A, the script of the Minoan civilisation.

“What excites me is not only the possibility of solving one specific historical puzzle, but the prospect of creating methods that can assist researchers across many different cases,” says Megyesi.

BBC

Business

EDECS and Assarain Group Awarded Strategic Dry Port & Veterinary Quarantine Development at EZAD IP3 by OPAZ in the Sultanate of Oman

EDECS Group, a leading EPC contractor in the MEA region, has been awarded the construction of the Dry Port and Veterinary Quarantine at the Economic Zone at Al Dhahirah (EZAD) – IP3, Ibri Province, Al Dhahirah Governorate, Sultanate of Oman, by the Public Authority for Special Economic Zones and Free Zones (OPAZ). The project is one of the Sultanate of Oman’s strategic economic projects aimed at advancing the objectives of Oman Vision 2040, and Assarain Group (Said Salem Al Wahaibi Group) is EDECS’ local partner in Oman. The award marks a strategic milestone in EDECS Group’s continued expansion across the GCC region and reinforces the Group’s growing role in delivering large-scale logistics and infrastructure developments.

The signing was attended by Saudi and Omani government ministers and officials, prominent public- and private-sector representatives, and key stakeholders from across the region, reflecting the strategic importance of the project to Oman’s future economic and infrastructure landscape.

Located in Ibri Province, Al Dhahirah Governorate, the Economic Zone at Al Dhahirah spans approximately 388 square kilometers and is strategically located approximately 20 kilometers from the Rub Al Khali border crossing with the Kingdom of Saudi Arabia and around 105 kilometers from Ibri Industrial City. The project is designed to serve as a major economic and logistics gateway that strengthens regional trade connectivity and supports economic integration between Oman, Saudi Arabia, and neighboring markets.

The development of the integrated economic zone aligns closely with Oman Vision 2040, the Sultanate’s long-term roadmap for sustainable development and economic diversification. The project is expected to contribute significantly to enhancing trade movement, attracting investments, supporting industrial and logistics activities, and creating new economic opportunities that reduce dependence on oil revenues while driving sustainable growth across the Sultanate.

The scope of work encompasses the full development cycle of the Dry Port project within the Economic Zone at Al Dhahirah (EZAD) in Ibri Province, including enabling works, earthworks, infrastructure development, utility networks, and the construction of all operational and supporting facilities such as administration buildings, X-ray facilities, accommodation buildings, and associated structures, through to testing, commissioning, and final fit-out, delivering a fully integrated and operational logistics asset.

Commenting on the signing, Eng. Hussein El Dessouky, Chairman and Managing Director of EDECS Group, said:

“We are proud of our partnership with our client, the Public Authority for Special Economic Zones and Free Zones in the Sultanate of Oman, on this strategic project, which marks an important milestone in EDECS Group’s regional expansion and its long-term commitment to delivering transformative infrastructure projects across the GCC countries.

The Economic Zone at Al Dhahirah is a highly promising project with significant economic and logistical potential, and we are confident that this collaboration with OPAZ will contribute meaningfully to Oman Vision 2040 by enhancing trade connectivity, attracting investments, and creating sustainable economic opportunities for the Sultanate and the wider region.”

Al Sheikh Salem Bin Said Al Wahaibi, Chairman of Assarain Group, stated:

“This agreement reflects Assarain Group’s commitment to diversifying the Omani economy and strengthening the Sultanate’s position as a strategic regional commercial and logistics hub. As a diversified group that has played a vital role in the country’s economic and social development since 1975, we remain committed to delivering excellence and innovation across our various sectors, enhancing economic competitiveness, and fostering confidence and growth in economic, social, and developmental relations across the Sultanate.

Thanks to the wise leadership and clear strategic vision of His Majesty, Sultan Haitham bin Tariq, Oman has transformed global economic challenges into promising investment opportunities through flexible policies focused on diversification, financial sustainability, and empowering the private sector as a key partner in development.

We are also proud to collaborate with EDECS Group, an EPC leader in the MEA region with extensive experience in executing complex and high-value projects. EDECS brings strong technical capabilities that are essential for this development, and we take pride in this partnership as we work together to deliver a project that creates long-term value for Oman and supports the goals of Oman Vision 2040.”

This milestone reflects EDECS Group’s position as a Grade A EPC contractor, recognized for its innovative engineering capabilities, strong execution expertise, and unwavering commitment to the highest safety standards. EDECS Group has been present in the KSA since 2013 across the Eastern and Western Provinces, and continues to deliver complex projects across the MEA region in line with its strategic vision of selectively pursuing high-impact developments that create long-term value and support sustainable economic growth.


Tech

Humanoid robots trialled as airport baggage handlers in Japan

Japan’s famously conscientious but overburdened baggage handlers will soon be joined by extra staff at Tokyo’s Haneda airport – although their new colleagues will need to take regular recharging breaks.

Japan Airlines will introduce humanoid robots on a trial basis from the beginning of May, with a view to deploying them permanently as a solution to the country’s chronic labour shortage.

The Chinese-made humanoids will move travellers’ luggage and cargo on the tarmac at Haneda, which handles more than 60 million passengers a year.

JAL and its partner in the initiative, GMO Internet Group, hope the experiment – which ends in 2028 – will lessen the burden on human employees amid a surge in inbound tourism and forecasts of more severe labour shortages.

In a demonstration for the media this week, a 130cm-tall robot manufactured by Hangzhou-based Unitree was seen tentatively “pushing” cargo on to a conveyor belt next to a JAL passenger plane and waving to an unseen colleague.

The president of JAL Ground Service, Yoshiteru Suzuki, said using robots to perform physically demanding work would “inevitably reduce the burden on workers and provide significant benefits to employees”, according to the Kyodo news agency.

Suzuki added, however, that certain key tasks – such as safety management – would continue to be performed by humans.

Japan is struggling to cope with a simultaneous surge in tourists from overseas and an ageing, declining population.

More than 7 million people visited the country in the first two months of 2026, according to the Japan National Tourism Organisation, after a record 42.7 million last year, despite a drop in the number of visitors from China triggered by a diplomatic row between Tokyo and Beijing.

According to one estimate, Japan will need more than 6.5 million foreign workers in 2040 to reach its growth targets as the indigenous workforce continues to shrink. The country’s foreign population has risen dramatically in recent years, but the government is now under political pressure to rein in immigration.

The president of GMO AI and Robotics, Tomohiro Uchida, said: “While airports appear highly automated and standardised, their back-end operations still rely heavily on human labour and face serious labour shortages.”

Robots can operate continuously for two to three hours and the firms are planning to use them to perform other tasks, such as cleaning aircraft cabins.

The Guardian
